This is a practical view for enterprise technical learning leaders who want training that actually changes how people work.

Technical training has a bad habit of drifting into feature tours and long explanations of how a system works. It’s easy for teams to walk away feeling informed but not necessarily more capable. And when the pressure is on to improve uptime, speed up adoption, or reduce mistakes, “feeling informed” won’t move the metrics that matter.

In other words, information is not learning.

More content is not the way to more learning or better performance.

The good news is that aligning training with real business performance isn’t complicated. It just requires starting in a different place and putting in the work that’s not related to creating content. It’s about focusing on the work people actually do. That’s the foundation behind how we build technical training at techstructional, and it’s what this guide helps you with.

Start With What the Business Is Trying to Improve

It’s tempting to begin by opening a tool, listing features, or gathering content from SMEs. But the real starting point is asking one simple question: What outcome are we trying to improve?

Most IT departments already have clear goals set for their projects. If they don’t, then they won’t be very successful in their technology implementations.

They may have goals such as increasing system reliability, reducing ticket volume, improving security behavior, or speeding up the adoption of a new platform. The trick is connecting training to the moments in everyday work that influence those numbers.

That’s where alignment begins.

If your CIO, IT VPs, or director of support is tracking a metric on their dashboard, it’s worth asking how training influences that metric.

Define Metrics You Can Actually Influence

Once you understand the goal, the next step is choosing the part of it that training can realistically impact and should impact. Training can’t fix poor tools or broken processes, but it can change how well people follow the workflow, how confident they feel using new tools, and how consistently they avoid common mistakes.

When you anchor the training to a behavior tied to a metric, everything becomes clearer.

Even a course that only ensures employees have the basic knowledge for using a new system should be tied in some realistic way to the work. That means setting goals for what they need to know out of the gate and how it ties to their job.

Without the right goals, training can’t succeed.

It’s not about using the new tool; it’s about using it effectively to do a job. What are the metrics for what employees should come away with after being trained?

Look at the Workflow, Not Just the Skill

The fastest way to find out what people need isn’t a full-scale analysis; it’s simply watching how the work gets done. Most performance issues come from people not understanding how a new tool fits into their workflow. They learned the features and how the tool works in general, but never how it applies to their actual work.

A quick way to uncover these moments is to talk with the teams doing the work, look at recent operational data, and shadow a mix of high performers and typical performers. The differences in how they approach tasks will show you where training can make the biggest impact.

We always look at new technology from the point of view of the work people need to perform. In the beginning, all they need to know is the most-used tasks that are essential to their day-to-day. Anything that’s not used every day, or is a nice-to-know, should live only in knowledge base articles or job aids so it’s searchable.

Turn Insights Into Clear, Performance-Based Objectives

Once you know the workflow and the moments that matter, training/learning objectives (or as we like to refer to them, performance objectives) almost write themselves.

Instead of focusing on knowing or understanding, focus on doing.

For example, instead of “Understand how to use the CRM system,” a better objective might be:

Enable employees to accurately log customer issues in the CRM so follow‑ups are completed on time.

This kind of objective points directly to a metric that has already been determined and the behavior that influences it. On-time follow-up completion is likely a metric that’s already tracked, so use it.

Design Training That Looks Like Real Work

Real change happens when training mirrors the work people do every day. That’s why simulations, practice scenarios, and short task-based examples perform so much better than long videos or walkthroughs.

When training recreates the real workflow in a palatable format, people see exactly what to do and how to avoid mistakes. They also gain confidence, which has a measurable effect on performance. A simulation that guides a social worker through applying for a patient to get a transplant forces them to understand the process and helps them learn more than any demo ever could.

And when people practice the actual workflow, you get a direct path from training activity to business impact.

Support Performance in the Flow of Work

Training shouldn’t end when the module ends. The real opportunity is helping people in the moment they actually need it. Short job aids, checklists, knowledge base articles, and quick tips placed right inside the tool or workflow help close gaps that training alone can’t close.

A 15-second reminder or an embedded quick reference can prevent the kind of mistakes that lead to outages or security issues. Sometimes that small nudge is what connects training back to the original business goal.

One way we help insert these performance helpers in the flow of work is through contextual help using a digital adoption platform (DAP).

Measure Results in a Way That Tells a Clear Story

Before launching training, capture a simple baseline. After launching, measure again. That doesn’t mean building a giant dashboard. It just needs to be enough to show whether the behavior, and therefore the metric, is improving.

For example, if training focused on diagnostic steps, you might look at:

- Whether those steps are used more consistently.

- Whether escalations drop for trained teams.

- Whether errors associated with missed steps decrease.

A clean before-and-after comparison gives leadership exactly what they need: a story that connects training to performance. Some of this data should already be baked into most digital transformations; it’s a matter of repurposing it for training and tapping into that business benefit IT is already adding to the organization.
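As a rough illustration, a before-and-after comparison can be as small as the sketch below. The weekly escalation counts are made-up numbers and `percent_change` is a hypothetical helper, not part of any particular reporting tool. The idea is simply to compare the average of a metric before training with the average after.

```python
from statistics import mean

# Hypothetical data: weekly escalation counts for one trained team.
baseline_weeks = [42, 38, 44, 40]  # four weeks before training
post_weeks = [33, 30, 34, 31]      # four weeks after training


def percent_change(before, after):
    """Return the percent change from the mean of `before` to the mean of `after`."""
    before_avg = mean(before)
    after_avg = mean(after)
    if before_avg == 0:
        raise ValueError("baseline average is zero; percent change is undefined")
    return (after_avg - before_avg) / before_avg * 100


change = percent_change(baseline_weeks, post_weeks)
print(
    f"Average weekly escalations: {mean(baseline_weeks):.1f} -> "
    f"{mean(post_weeks):.1f} ({change:+.1f}%)"
)
```

A comparison like this shows a change, not its cause. Where possible, running the same numbers for a team that has not yet been trained helps rule out seasonal or system-wide effects.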

Share Results in the Language Your Stakeholders Use

Reporting shouldn’t be a data dump. It should be a narrative that clearly shows the business problem, the training intervention, and the outcome.

A simple one‑page summary works best. Leaders care less about completions or views and more about what changed. If they see that training influenced uptime, accuracy, adoption, or ticket trends, the value becomes obvious.

Know When to Bring in External Help

Some situations call for support beyond what internal teams have time or resources for. When the system is complex, workflows are tightly connected, or performance gaps are already hurting the business, having a partner who can analyze workflows and build realistic technical simulations can accelerate results.

That’s where techstructional often comes in, and it’s how we help many of our clients. That’s particularly true when companies need simulation-based training built on a sustainable, measurable framework for technical training.

A Simple Approach You Can Reuse

Aligning technical training with performance goals doesn’t have to be complicated. The path looks like this:

Start with the business goals/outcomes → Find the workflow moments that influence them → Build realistic practice → Support the behavior in the workflow → Measure what changes → Share results in a simple story.

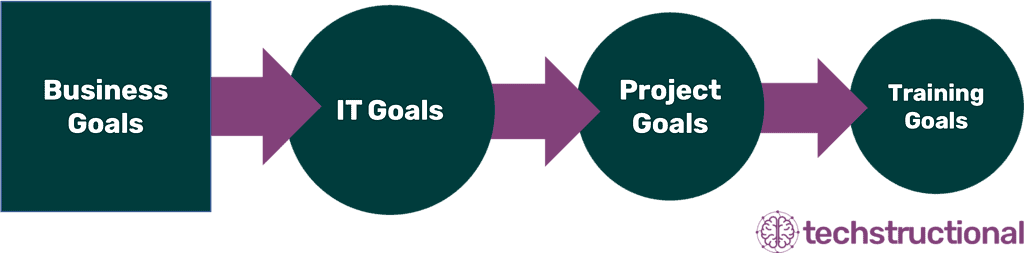

Below is an easy flow to figure out what the training or performance goals should be. Technology projects exist to serve the business, which has its own goals. That means everything in training should flow seamlessly up to those business goals.

When you design training this way, it becomes less about content and more about capability. And capability always shows up in the metrics that matter.

If you want help mapping one of your current initiatives to this approach, or you want to explore what simulation-based technical training could look like for your team, you’re welcome to schedule a free consultation.